Claude Opus 4.7 Deep Dive: Benchmarks, Vision Upgrades & Engineering Breakdown

The Lead: A Meaningful Step Up for Production Engineering

Anthropic's Claude Opus 4.7 isn't a generational leap — it's a precision upgrade targeted squarely at the pain points engineers actually hit in production: code that slips through review, agents that hallucinate tool calls, and vision models that can't read a dense architecture diagram. Released in April 2026, Opus 4.7 follows the same model identifier convention (claude-opus-4-7) and maintains the same $5 / $25 per million input/output token pricing as Opus 4.6, while delivering measurable, benchmarked improvements across coding, vision, and agentic orchestration.

For teams already running Opus 4.6 in production, the migration story is nuanced: the new tokenizer consumes 1.0–1.35× more tokens depending on content type, and the model is significantly more literal in instruction following — meaning prompts that relied on Opus 4.6 "filling in the gaps" may need retuning. But for teams willing to do that work, the reward is a model that can be handed off complex, multi-session engineering work with far less babysitting.

TL;DR — What Changed in Opus 4.7

13% better on a 93-task coding benchmark · 3× more production tasks resolved on Rakuten-SWE-Bench · 98.5% visual acuity for computer-use agents · 90.9% accuracy on BigLaw Bench · New xhigh effort level for fine-grained reasoning/latency control. Pricing unchanged.

Benchmark Breakdown: Opus 4.7 vs Opus 4.6

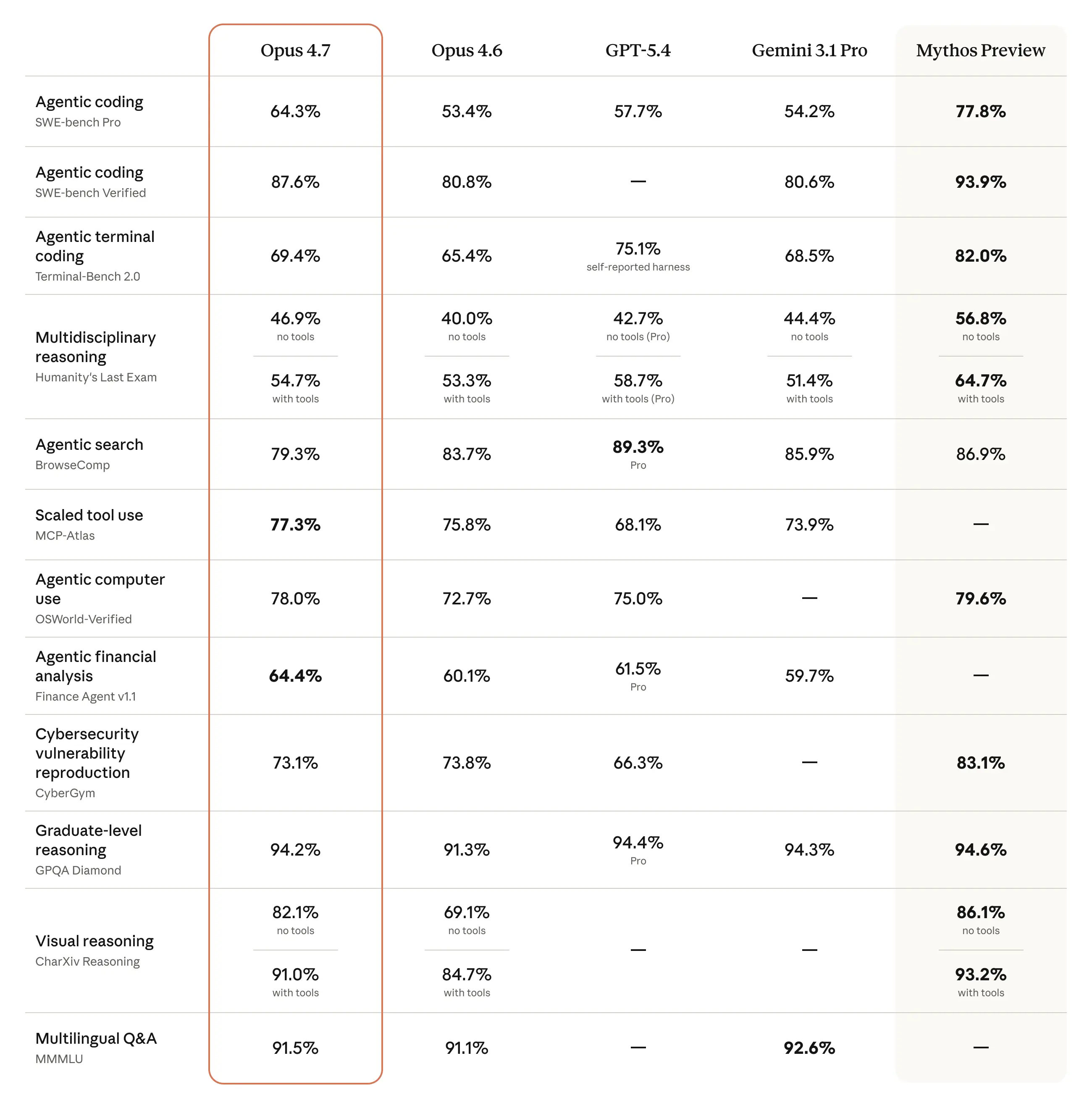

Anthropic published benchmark comparisons across coding, legal reasoning, visual acuity, and financial agent tasks. Here's the full picture:

| Benchmark | Opus 4.6 | Opus 4.7 | Delta |

|---|---|---|---|

| 93-Task Coding Benchmark | ~baseline | +13% | ↑ 13% |

| Rakuten-SWE-Bench (prod tasks resolved) | 1× | 3× | ↑ 200% |

| BigLaw Bench (high effort) | ~84–86% | 90.9% | ↑ ~5pp |

| Finance Agent Eval | — | Top score | New SOTA |

| GDPval-AA Eval | — | Top score | New SOTA |

| Visual Acuity (computer-use) | ~91–93% | 98.5% | ↑ ~6pp |

| Max image resolution | ~800px long edge | 2,576px (~3.75MP) | ↑ 3× pixel area |

The Rakuten-SWE-Bench number is the most striking: tripling the rate of real-world production task resolution isn't a marginal tweak — it's the kind of improvement that changes the economics of AI-assisted engineering. Teams at Rakuten running SWE-Bench-style evaluations saw Opus 4.7 resolve tickets that Opus 4.6 consistently stalled on.

Architecture & Implementation: What's Driving the Coding Gains

The 13% coding benchmark improvement and 3× SWE-Bench resolution rate don't come from a single architectural change — Anthropic hasn't published the internals — but several behavioral changes are observable:

1. Stricter Instruction Adherence

Opus 4.7 interprets prompts more literally than 4.6. Where 4.6 would sometimes infer intent and add unrequested context, 4.7 does exactly what you ask. For engineering tasks with explicit specs (write this function, fix this bug, add this test), this is a net positive. For open-ended prompts, it means more upfront specification is required.

Practically: if you're migrating from 4.6, audit prompts that use vague directives like "improve this code" — 4.7 will pick the most conservative interpretation by default.

2. Better Self-Verification in Long Chains

In multi-step agentic workflows, Opus 4.7 exhibits stronger self-verification loops. It's more likely to backtrack when it detects logical inconsistency in its own reasoning chain, rather than pushing forward into a compounding error state. This is particularly visible in tasks that span >10 tool calls.

3. File System Memory Utilization

Opus 4.7 is explicitly better at leveraging file-system-based memory across multi-session work. In practice, this means agents using scratch-pad files or structured note-taking patterns get significantly better cross-session continuity. If you're building long-horizon agentic pipelines, this is worth testing with your current memory architecture — you can try structuring outputs with our Code Formatter to keep memory payloads clean and consistently parseable.

4. Tool-Use Reliability

Agentic orchestration reliability has improved substantially. Opus 4.7 is less likely to hallucinate tool schemas, more consistent at chaining multi-tool sequences, and better at respecting tool return value contracts. Teams running MCP-based orchestration or Claude's computer-use API will see the most benefit here.

Vision Upgrade: 3× Resolution, 98.5% Acuity

The vision improvement in Opus 4.7 deserves its own section because it's architecturally significant. Supported image resolution increases from roughly 800px on the long edge to 2,576px (approximately 3.75 megapixels) — more than 3× the pixel area of Opus 4.6.

Why does this matter for engineers specifically?

- Architecture diagram analysis: Full-resolution network diagrams, ER diagrams, and system maps can now be processed without downsampling artifacts. Opus 4.6 would often miss small labels or misread connector types on complex diagrams.

- Computer-use agents: At 98.5% visual acuity, agents can reliably target UI elements that were previously below the reliable-click threshold. Small buttons, dense data tables, and multi-monitor layouts are all now viable.

- Document processing: Legal filings, financial statements, and technical PDFs with small-font tables can be processed at near-human accuracy.

- Design generation: Opus 4.7 produces higher-quality interfaces, slides, and documents when given visual reference material at full resolution.

The 98.5% visual acuity score is measured on Anthropic's internal computer-use benchmark suite, which tests element targeting accuracy across a range of UI densities and screen configurations.

New: xhigh Effort Level

Opus 4.7 introduces a new xhigh effort level, sitting above the existing high setting in the API. This gives engineers finer control over the reasoning/latency tradeoff:

| Effort Level | Use Case | Token Cost |

|---|---|---|

low |

Fast lookups, simple completions | Lowest |

medium |

Standard tasks | Moderate |

high |

Complex reasoning, multi-step agents | High |

xhigh NEW |

Hardest tasks: legal, finance, SWE, research | Highest |

The xhigh level is where the 90.9% BigLaw Bench and top Finance Agent scores were achieved. For most engineering workflows, high remains the right default — xhigh is reserved for tasks where reasoning depth is the binding constraint and latency is acceptable.

The New Tokenizer: Cost Impact

This is the one area where Opus 4.7 requires careful planning. The updated tokenizer consumes 1.0–1.35× more tokens than Opus 4.6 on the same input, depending on content type:

- Plain prose: ~1.0–1.1× (minimal impact)

- Code-heavy prompts: ~1.15–1.25× (moderate impact)

- Structured data / JSON: ~1.2–1.35× (largest impact)

Additionally, at xhigh effort, the model produces more output tokens as it externalizes reasoning steps. For batch processing pipelines or high-volume applications, run a cost model before migrating. The base pricing is unchanged at $5 / million input and $25 / million output, but effective cost may be higher if your workloads are tokenizer-sensitive.

Availability & API Reference

Opus 4.7 is available immediately across:

- Claude.ai (all product tiers)

- Anthropic API — model ID:

claude-opus-4-7 - Amazon Bedrock

- Google Cloud Vertex AI

- Microsoft Azure AI Foundry

# Python SDK — Anthropic

import anthropic

client = anthropic.Anthropic()

message = client.messages.create(

model="claude-opus-4-7",

max_tokens=4096,

# Use xhigh for complex reasoning tasks

# thinking={"type": "enabled", "budget_tokens": 10000},

messages=[

{

"role": "user",

"content": "Review this pull request diff and identify security vulnerabilities..."

}

]

)

print(message.content[0].text)Safety & Security Profile

Opus 4.7 maintains a security profile similar to Opus 4.6 with two notable additions:

- Improved honesty: More consistent refusal of requests that involve deception, even when wrapped in creative or hypothetical framing.

- Prompt injection resistance: Stronger detection of injection attacks within tool return values and multi-agent message chains — directly relevant for agentic pipelines where tool outputs may be user-controlled.

- Reduced cyber capabilities vs Claude Mythos Preview: Anthropic has deliberately constrained CBRN-adjacent capabilities. Legitimate security researchers can apply to the Cyber Verification Program for elevated access.

Migration Checklist for Opus 4.6 → 4.7

- ✅ Audit prompts that rely on implicit intent-filling — 4.7 is more literal

- ✅ Benchmark token consumption on your top workloads (expect 1.0–1.35× increase)

- ✅ Test multi-step agentic chains end-to-end — backtracking behavior has changed

- ✅ Update model ID from

claude-opus-4-6toclaude-opus-4-7 - ✅ Consider

xhigheffort for tasks where 4.6 high effort was borderline

Developer Verdict

Claude Opus 4.7 is the right upgrade for teams running production agentic workflows, SWE pipelines, or document intelligence systems. The 3× SWE-Bench resolution rate and 98.5% visual acuity aren't benchmark theatre — they reflect real behavioral improvements that show up in day-to-day engineering work. The tokenizer overhead is real but manageable with proper cost modeling, and the stricter instruction following actually improves predictability once your prompts are calibrated to it.

If you're running Opus 4.6 in a low-volume, non-agentic setting with tight latency budgets, the upgrade is lower priority. But if you're building agents that do meaningful work over multiple sessions — the kind of work where a 13% code quality improvement and 3× task resolution rate compounds — Opus 4.7 is worth the migration effort.

Get Engineering Deep-Dives in Your Inbox

Weekly breakdowns of AI models, architecture, and developer tooling — no fluff.

Related Deep-Dives

AI Code Review 2026: Copilot vs Claude vs Gemini

Head-to-head on real PRs — latency, accuracy, and agentic integration compared.

System ArchitectureAnthropic Agentic Infrastructure: Performance Benchmarks

How Anthropic's infrastructure supports long-horizon multi-agent workloads at scale.